
Minerva

Year: 2025

Client: Minerva

Timeframe: 2 weeks

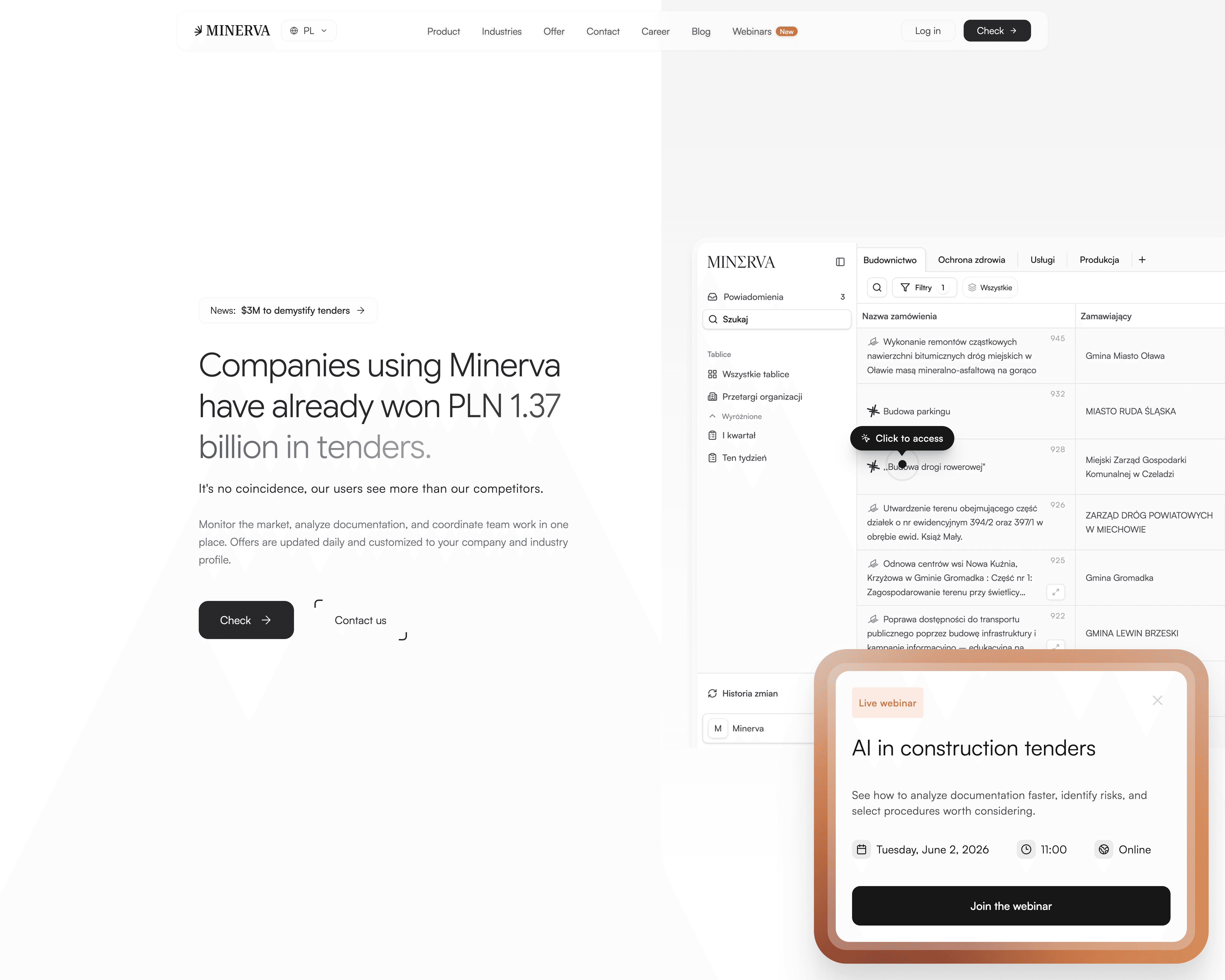

Minerva came to us with a product challenge that went far beyond interface polish.

At the core of the platform is an AI-powered tender search that depends on one thing: learning the business well enough to surface the right opportunities. But in practice, that learning process was too heavy, too fragmented and too dependent on users completing setup steps they often skipped. The goal of this project was to redesign the experience so Minerva could learn more naturally from user behaviour, improve tender-match accuracy over time and make the platform feel more trustworthy from day one.

The challenge

The problem was not only that users were getting irrelevant tenders. The deeper issue was that the system was not learning in a way that felt natural, visible or easy to trust.

Minerva relied heavily on onboarding inputs, keyword setup and relevance feedback to improve search accuracy. But clients often completed their profiles only partially, skipped important configuration steps and rarely came back to refine the setup later. As a result, the system either missed valuable tenders or returned too many irrelevant ones — which weakened trust and reduced long-term engagement.

There was also a perception gap. Users often did not review disqualified tenders at all and simply assumed the system had failed to find them. On top of that, the platform made the feedback process feel too mechanical: users had to jump between views, interpret scattered signals and repeatedly “teach” the system without enough guidance or context. That same trust-through-clarity challenge also appeared in Lokero, although there it was solved inside a marketplace flow rather than an AI-assisted enterprise product.

The real challenge, then, was bigger than setup. It was designing a system that could learn continuously and naturally as clients worked — more like a new analyst observing decisions, absorbing context, asking for corrections and improving over time.

The solution

We approached Minerva as a self-improving product experience, not just a search interface.

The design direction focused on making learning feel embedded into the workflow instead of front-loaded into long onboarding or configuration forms. Rather than forcing users to do all the thinking upfront, the system was redesigned to collect better signals continuously — through guided feedback, clearer evaluation moments and more visible reasoning behind what the platform considered relevant or irrelevant.

To support that, we reworked the evaluation experience around clarity and explainability. Users can now understand why a tender appears in the feed, why another one was rejected and where the system is uncertain. Instead of silently guessing, Minerva can ask for lightweight corrections in the moment, so the product improves through small interactions rather than heavy setup. In practice, this takes the form of a structured decision panel, plain-language reasoning and an assistant-like learning flow.

We also redesigned the tender list itself to make high-volume evaluation faster. The interface surfaces the most important information earlier — making it easier to scan, compare and decide without opening every tender one by one. The result is a system that feels less like a static search tool and more like an active decision-support layer.

A different form of this “make complex decisions easier inside the product” challenge also showed up in modue, but there the focus was ecommerce choice architecture rather than enterprise recommendation logic.

What we delivered

A redesigned product experience focused on helping Minerva learn more naturally and generate more accurate tender matches over time.

This included:

a redesigned setup direction that reduces dependence on heavy onboarding

a more natural feedback loop for teaching the system what is and isn’t relevant

clearer explanations of why tenders are surfaced or rejected

a structured decision interface that supports faster and more confident evaluation

improved visibility into uncertain or borderline cases where user input matters most

a redesigned tender list built for quicker scanning and lower cognitive load

a more assistant-like product direction, where the system improves continuously through interaction rather than relying only on static initial setup

Taken together, these deliverables serve the same principles: clarity, speed, explainability and a decision-support experience that reduces friction while improving trust in the recommendations.

Final effect

The final result is a product direction that makes Minerva feel more teachable, more transparent and much easier to trust.

Instead of asking users to do heavy setup work upfront and hope the system gets better later, the redesigned experience creates a more natural loop: the platform learns through interaction, explains its reasoning more clearly and gives users better visibility into how their feedback shapes future results. That has direct implications for accuracy, engagement and retention — because when users trust the feed more, they are more likely to keep using it.

This is also what makes the project strategically valuable. It does not just improve interface quality. It improves how the product learns, how users understand that learning and how the entire recommendation experience supports long-term product adoption.

If you want to see how we think about clarity, friction and decision-making in digital products more broadly, start with our 48h Audit.